Systems

Featured matches

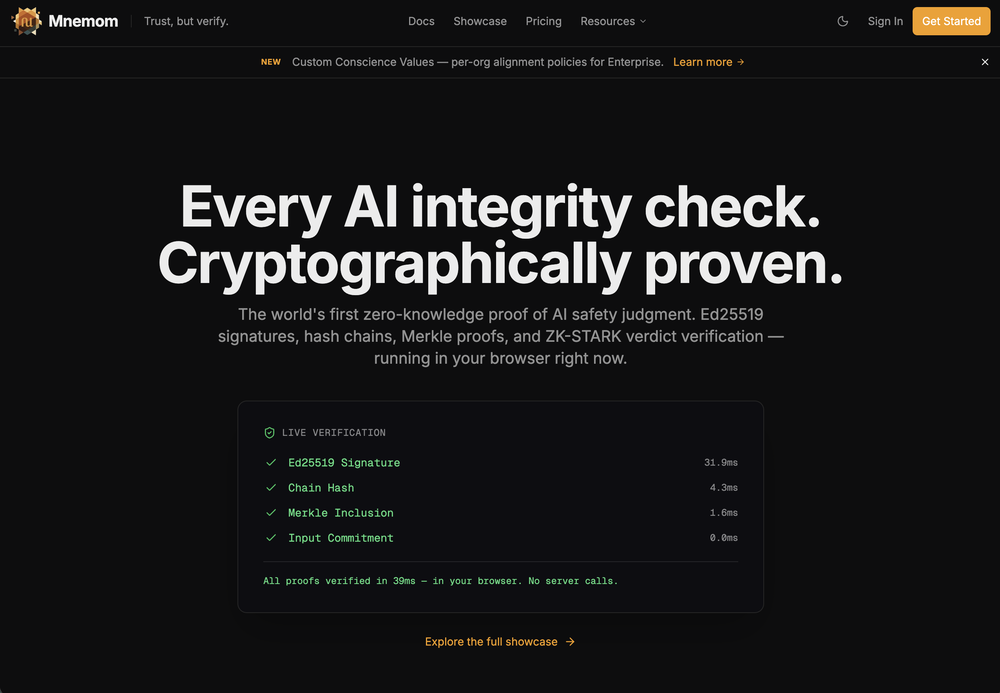

Trust scores, cryptographic proof, and risk assessment for AI agents.

Alex Garden (@Mnemom), Feb 24, 2026: We built Mnemom because we were shipping AI agents into production and couldn't answer a basic question: how do you prove this thing is doing what you told it to? Not monitor. Prove. So we built the proof layer. Cryptographic attestations, trust scores, risk assessment, containment: everything you need to deploy agents you can actually stand behind. It's free to start and open source. We'd love to hear what you think. New features are shipping daily.

Really smooth experience with Vivgrid, makes taking agents to production way easier.

Verified tools

Great tool! Loved that it asked questions before designing.

- Sponsor

Base44 Superagents: AI agent that does it all

We built ModelRed because most teams don't test AI products for security vulnerabilities until something breaks. ModelRed continuously tests LLMs for prompt injections, data leaks, and exploits. Works with any provider and integrates into CI/CD. Happy to answer questions about AI security!

Private Q&A with your Documents on Windows or Mac.
