What type of visibility does Lexi provide for the cost breakdown?

Lexi provides full visibility of the cost breakdown in the response headers. It shows savings, margin, and balance.

Does Lexi hold my AI provider's keys?

No, Lexi doesn't hold your AI provider's keys. They are sent directly to the designated AI provider.

What types of integrations are supported by Lexi?

Lexi supports various integrations, including streaming, tool calls, and structured output.

Does anything else in my operational stack change when I use Lexi?

No, when using Lexi, nothing else in your operational stack changes.

How do I start using Lexi?

To start using Lexi, you just need to modify one URL in your configuration.

How does Lexi's API key work?

A single Lexi API key works across the different AI providers Lexi supports. To use it, replace the baseURL in your code with the Lexi API URL and keep sending requests as before.

Is Lexi free to start with?

Yes, Lexi is free to start with. It even provides $10 free without needing a card.

How does Lexi maintain coherent AI conversations?

Lexi keeps the AI from losing context in the middle of long discussions, so the conversation stays coherent.

How long does it take to configure Lexi?

It takes approximately two minutes to configure Lexi.

What information can I find in Lexi's response headers?

In the response headers, you can find information such as the request cost, savings, remaining balance, original tokens, compressed tokens, compression ratio, and Lexi's margin.
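As a rough illustration, the fields listed above could be collected from a response as sketched below. The header names (`x-lexi-request-cost` and so on) are hypothetical placeholders invented for this sketch, not names confirmed by Lexi; inspect a real response to find the actual ones.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Mapping

# Hypothetical header names, invented for illustration only.
HEADER_NAMES = {
    "request_cost": "x-lexi-request-cost",
    "savings": "x-lexi-savings",
    "balance": "x-lexi-balance",
    "margin": "x-lexi-margin",
    "original_tokens": "x-lexi-original-tokens",
    "compressed_tokens": "x-lexi-compressed-tokens",
    "compression_ratio": "x-lexi-compression-ratio",
}


@dataclass(frozen=True)
class CostBreakdown:
    request_cost: Decimal
    savings: Decimal
    balance: Decimal
    margin: Decimal
    original_tokens: int
    compressed_tokens: int
    compression_ratio: float


def parse_cost_headers(headers: Mapping[str, str]) -> CostBreakdown:
    """Build a CostBreakdown from response headers.

    Header lookup is case-insensitive; a missing header raises KeyError.
    """
    lowered = {name.lower(): value for name, value in headers.items()}

    def get(field_name: str) -> str:
        return lowered[HEADER_NAMES[field_name]]

    return CostBreakdown(
        request_cost=Decimal(get("request_cost")),
        savings=Decimal(get("savings")),
        balance=Decimal(get("balance")),
        margin=Decimal(get("margin")),
        original_tokens=int(get("original_tokens")),
        compressed_tokens=int(get("compressed_tokens")),
        compression_ratio=float(get("compression_ratio")),
    )
```

Money values are parsed as `Decimal` rather than `float` so that small per-request amounts can be summed without rounding drift.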

Can I end up paying more with Lexi than by going direct?

No. You never pay more with Lexi than you would going direct: if there's no saving, there's no Lexi fee.

What kind of URL modification does Lexi require?

Lexi requires changing one URL in your configuration to start reducing your AI request costs.

How does Lexi play a role in AI budgeting?

Lexi helps with AI budgeting by reducing the cost of AI requests. Its fee is a share of the savings made on each request, so a request with no saving carries no fee.

Does Lexi work with the AI providers I'm already paying for?

Yes, Lexi works with AI providers that users are already paying for.

What is Lexi?

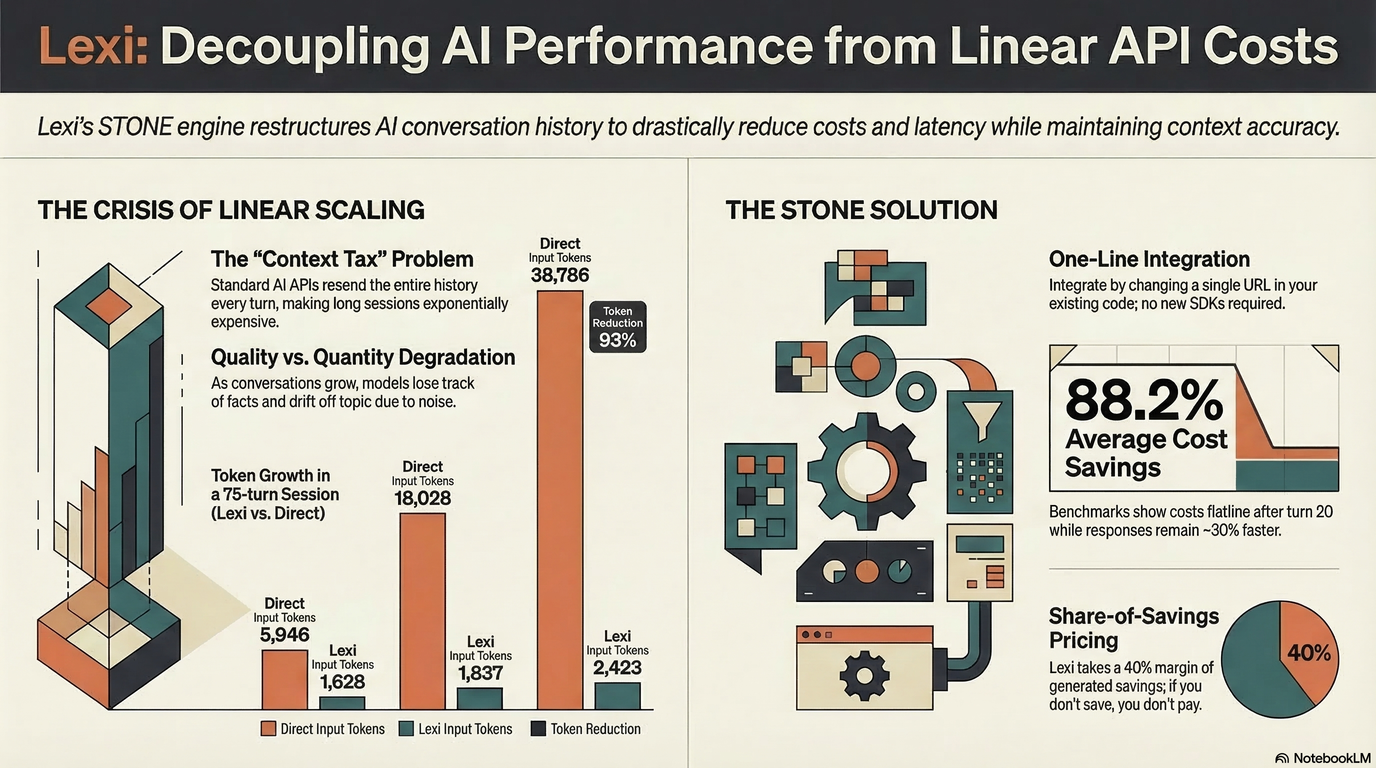

Lexi is a tool that lowers the cost of Application Programming Interface (API) usage for Artificial Intelligence (AI) applications. It works with the API services you already use from different AI providers, saves money on each request, and charges a share of those savings, while keeping the quality of long AI conversations from degrading over time.

What AI providers does Lexi support?

Lexi supports API services from major AI providers, including OpenAI, Anthropic, Google, xAI, DeepSeek, and Meta.

How do I integrate Lexi into my existing application?

Integrating Lexi is as simple as swapping your provider's base URL for the Lexi endpoint in your application configuration settings. You then supply your Lexi API key alongside your existing provider key, and your application works just as before.
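A minimal sketch of that swap, written against a generic configuration object rather than any particular SDK. The endpoint URL is a placeholder, and the header used to carry the Lexi key is a hypothetical name chosen for this example; how the two keys are actually combined is defined by Lexi's documentation, not by this sketch.

```python
from dataclasses import dataclass, field, replace
from typing import Dict

# Placeholder endpoint: substitute the URL given in your Lexi account.
LEXI_BASE_URL = "https://lexi.example.invalid/v1"
# Hypothetical header name for the Lexi key; the real mechanism may differ.
LEXI_KEY_HEADER = "x-lexi-api-key"


@dataclass(frozen=True)
class ClientConfig:
    base_url: str
    provider_api_key: str
    extra_headers: Dict[str, str] = field(default_factory=dict)


def route_through_lexi(config: ClientConfig, lexi_api_key: str) -> ClientConfig:
    """Return a copy of config that points at Lexi.

    Only the base URL changes; the provider key is passed through untouched.
    """
    headers = {**config.extra_headers, LEXI_KEY_HEADER: lexi_api_key}
    return replace(config, base_url=LEXI_BASE_URL, extra_headers=headers)


# Existing direct-to-provider configuration (keys are placeholders).
direct = ClientConfig(
    base_url="https://api.openai.com/v1",
    provider_api_key="sk-provider-placeholder",
)
via_lexi = route_through_lexi(direct, lexi_api_key="lexi-key-placeholder")
```

Returning a new configuration instead of mutating the old one makes it easy to switch back to the direct provider URL if needed.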

How does Lexi handle API key security?

Lexi prioritizes security by ensuring API keys go directly to their respective AI providers with each request. Lexi never stores these keys, safeguarding the privacy and security of your service.

What's the cost of using Lexi and how is it billed?

Using Lexi costs less than going directly to the API providers. Lexi charges 40% of the savings it achieves on each request, deducted from your prepaid balance; you keep the remaining 60%. Lexi provides complete cost transparency via HTTP headers in each response, showing the exact cost breakdown, savings, margin, and remaining balance.
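The 40/60 split can be worked through with a small calculation. The dollar amounts below are made up for illustration, and the sketch assumes that "savings" means the direct provider cost minus the provider cost when the request goes through Lexi.

```python
from decimal import Decimal
from typing import NamedTuple

LEXI_SHARE = Decimal("0.40")  # share of savings charged by Lexi, per the answer above


class RequestBill(NamedTuple):
    savings: Decimal       # direct cost minus provider cost via Lexi
    lexi_fee: Decimal      # deducted from the prepaid balance
    kept_by_user: Decimal  # savings left after the fee
    total_paid: Decimal    # provider cost via Lexi plus the Lexi fee


def bill_request(direct_cost: Decimal, cost_via_lexi: Decimal) -> RequestBill:
    """Split the savings on one request (assumes cost_via_lexi <= direct_cost)."""
    savings = max(direct_cost - cost_via_lexi, Decimal("0"))
    lexi_fee = savings * LEXI_SHARE
    return RequestBill(
        savings=savings,
        lexi_fee=lexi_fee,
        kept_by_user=savings - lexi_fee,
        total_paid=cost_via_lexi + lexi_fee,
    )


# Illustrative request: $1.00 direct, $0.60 through Lexi.
bill = bill_request(Decimal("1.00"), Decimal("0.60"))
# savings = 0.40, lexi_fee = 0.16, kept_by_user = 0.24, total_paid = 0.76

# No saving means no fee, so the total equals the direct cost.
no_saving = bill_request(Decimal("1.00"), Decimal("1.00"))
```

In the first case the request costs $0.76 in total instead of $1.00; in the second the fee is zero and the total stays at $1.00.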

What savings can I expect from using Lexi?

The savings you can expect from using Lexi depend on the cost of your current API services. Lexi charges 40% of the savings made on each request, so you always keep 60% of them. The absolute amount you save therefore grows with the cost and volume of your API calls.

What happens when there's no saving on a request made via Lexi?

When there's no saving on a request made via Lexi, the user is not charged a Lexi fee. Essentially, using Lexi will never make users pay more than what they would by going direct to the API provider.

How does Lexi maintain the coherence of long AI conversations?

Lexi includes a mechanism for keeping long AI conversations coherent. Instead of the conversation degrading over time, as is common with many AI models, it stays coherent for a much longer period.

How many models can I access through Lexi?

Lexi provides access to 28 models across 6 different AI providers. This gives users a wide range of models to choose from, depending on their specific needs and preferences.

Is there a trial period for Lexi?

Lexi offers a trial with $10 of free credit to start with. No credit card is required.

What changes are required to start using Lexi?

The only change required to start using Lexi is changing one URL in your application configuration settings. Specifically, you need to swap your current provider's base URL for the Lexi endpoint.

Why is Lexi a good choice for API management and cost reduction?

Lexi is a good choice for API management and cost reduction because it lowers the cost of API usage. It offers a simple integration process, does not store your API keys, keeps long AI conversations coherent, and provides complete cost transparency. Lexi's fees are based solely on the savings made on each request, so you are never charged more than you save.

How does Lexi provide transparency on costs and savings?

Lexi provides cost transparency by including HTTP headers in every API response, which carry the exact cost breakdown: savings, margin, and remaining balance. This gives you a detailed view of what you saved and what was charged.

What is structured output in Lexi?

Structured output is a provider feature in which the model's response follows a defined format, such as a JSON schema. Lexi supports it alongside streaming and tool calls. The cost breakdown, savings, and balance are reported separately, in the response headers.

What functions can be called in Lexi?

All the API features supported by your original AI provider are also supported through Lexi, including streaming and tool calls (function calling).

What type of data can Lexi help me intercept, log and display?

Lexi can help intercept, log and display the response headers in each API call. These headers carry detailed information about the request cost, savings, your remaining balance and more.

How does using Lexi differ from standard API usage?

Using Lexi adds to standard API usage by lowering cost, improving conversation coherence, and providing cost transparency, without disrupting your workflow or stack. Changing one URL is all it takes to get these benefits.

What kind of API documentation does Lexi provide?

Lexi provides comprehensive API documentation. It includes detailed descriptions of how to get the service integrated into your existing application, information on function calling, and instructions on how to use its unique features.

What is meant by Lexi's 'rapid deployment'?

'Rapid deployment' in the context of Lexi means that the tool is designed to be fully operational in about two minutes, with minimal changes to your current stack.

Can I use the same endpoint for different AI provider keys in Lexi?

Yes, the same Lexi endpoint can serve different AI provider keys. Set the provider key in your configuration to the one for the AI provider you want to reach, and the request goes to that provider.
